
Starting from the adage that energy is the common currency of the universe, it follows that all types of engineering are equal, and that all forms of energy are equally applicable as a medium for conducting computation. The fact that we have come to think of computers as being digital and electronic is an accident of history: digital electronics is merely the technology that we happen to have perfected for this purpose. This page summarises several fields of non-conventional computing, as viewed from the perspective of a computer engineer. References are made to articles in New Scientist magazine (abbreviated to NS), since this is a useful source of initial information before turning towards the more academic sources.

A flow of electrons can be used to carry energy (electrical engineering), or to carry information (electronic engineering), with each bit requiring a certain minimum amount of energy to carry it, E > kT ln(2). In the same way, a flow of photons, from a laser, can be used directly to carry energy (for cutting and welding, for example) or to carry information (in fibre optics). Similarly for mechanical energy, wheels and levers can be used to carry kinetic energy (for a car, or a power-tool), or information (as in Babbage's Analytical Engine); meanwhile water wheels can be used to extract the energy carried by a flow of molecules, while hydraulic logic uses that flow to carry information; and similarly for wind and gas turbines versus pneumatic logic.

Computer engineering, like most of engineering in general, is made tractable by our ability to abstract away from the underlying complexity of physical devices, and our ability to take their overall, external behaviour on trust, without having constantly to worry about what is going on inside the black box. Thus, a transistor is treated as a digital switch in a potential divider chain, without worrying about the quantum mechanics that allows it to function this way. Similarly, the NOR gate (or NAND gate) is treated as a universal building block in digital electronics, without worrying about the switches and potential divider chains inside, and these gates can be implemented in any of the above energy forms, not just digital electronics.
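The universality of the NOR gate can be illustrated with a minimal sketch in Python. The function names (`nor`, `not_`, `or_`, `and_`, `xor_`, `half_adder`) are chosen here purely for illustration. The only primitive is `nor`; everything else is wired up from it, which is the sense in which any technology that can supply one such gate can supply the rest.

```python
def nor(a: bool, b: bool) -> bool:
    """The single primitive gate: true only when both inputs are false."""
    return not (a or b)


def not_(a: bool) -> bool:
    # A NOR gate with both inputs tied together acts as an inverter.
    return nor(a, a)


def or_(a: bool, b: bool) -> bool:
    # OR is NOR followed by an inverter.
    return not_(nor(a, b))


def and_(a: bool, b: bool) -> bool:
    # De Morgan: a AND b == NOR(NOT a, NOT b).
    return nor(not_(a), not_(b))


def xor_(a: bool, b: bool) -> bool:
    # True when exactly one input is true.
    return and_(or_(a, b), not_(and_(a, b)))


def half_adder(a: bool, b: bool) -> tuple:
    """Return (sum, carry) for two one-bit inputs, using NOR gates only."""
    return xor_(a, b), and_(a, b)
```

Nothing in the sketch depends on how `nor` is physically realised, so the same wiring applies whether the gate is electronic, hydraulic or pneumatic.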

Any computer constructed from conventional computer engineering schematics (for example, using digital logic gates), even a non-electronic one, is still equivalent to a Universal Turing Machine (UTM), and thus bound by the same limitations of the Turing Halting Problem (THP). A more exotic goal, therefore, is to construct computers that are not UTM equivalent (NS, 19-Jul-2014, p34). There is a tempting resonance between the limitation of THP and that of Heisenberg's Uncertainty Principle (HUP). The connection between the two seems to be cemented further by research results that establish that HUP can be expressed in terms of information theory (NS, 23-Jun-2012, p8), and that, if we did manage to find out both a quantum particle's position and its momentum, Maxwell's Demon would be able to violate the second law of thermodynamics (NS, 13-Oct-2012, p32). In the case of the UTM, we cannot be certain whether any given computer program will halt; however, if it does turn out to halt, computation theory allows us to work out, with certainty, what the final state of the machine will be, including any answer that it is supposed to deliver. Perhaps this, then, is the Heisenberg-like trade-off. The proposal would be to design computers that are more likely to be guaranteed to halt, but whose final state is known only probabilistically. It could be argued that evolutionary systems and quantum computers already do this.
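One loose reading of this trade-off can be sketched in Python: a computation that is given a fixed step budget is guaranteed to halt, but may have to report that it does not know the answer. This is only an illustration on a conventional machine, not a way round the halting problem, and the Collatz iteration is used here simply as a hypothetical stand-in for a program whose running time is hard to predict. The names `collatz_steps` and `budget` are assumptions made for the example.

```python
from typing import Optional


def collatz_steps(n: int, budget: int) -> Optional[int]:
    """Count the Collatz steps needed to reach 1 from n.

    The loop always halts, because it gives up once `budget` steps
    have been taken; in that case the result is None ("unknown").
    """
    steps = 0
    while n != 1:
        if steps == budget:
            return None  # halting is guaranteed, the answer is not
        n = n // 2 if n % 2 == 0 else 3 * n + 1
        steps += 1
    return steps
```

With a generous budget, `collatz_steps(27, 1000)` delivers a definite answer; with a budget of 50 it halts early and returns `None`.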

For comparison of the engineering domains, consider 1 mol of particles flowing per second (6.022137 × 10^23 particles per second).

Because of this mismatch, electronic and photonic information is handled using minute fractions of a mol of their respective particles, and the three types of mechanical information handlers (solid, liquid/hydraulic and gas/pneumatic) use many mols. Ultimately, all technologies are limited by the E > kT ln(2) constraint of Maxwell's Demon. That is, 1 bit/s for each 2.6 × 10^-21 W at STP. This, though, would give a computer that worked quantum-mechanically, and only produced correct answers with a given probability. In reality, even our leanest-burn computers work many orders of magnitude above this limit.
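The figure of 2.6 × 10^-21 W per bit/s can be checked with a short calculation. The sketch below, in Python, assumes that STP means a temperature of 273.15 K and uses the SI value of the Boltzmann constant; the function names are chosen for illustration only.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23  # SI value of the Boltzmann constant


def min_energy_per_bit(temperature_k: float) -> float:
    """Minimum energy per bit, kT ln(2), in joules."""
    return BOLTZMANN_J_PER_K * temperature_k * math.log(2)


def max_bit_rate(power_w: float, temperature_k: float) -> float:
    """Upper bound, in bit/s, on what a given power could carry at this limit."""
    return power_w / min_energy_per_bit(temperature_k)


# Assumption: STP is taken as 0 degrees Celsius (273.15 K).
limit_at_stp = min_energy_per_bit(273.15)
```

Here `limit_at_stp` comes out at roughly 2.6 × 10^-21 J per bit, so a single watt would, in principle, support a few times 10^20 bit/s.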

Optical (Photonic) Computers

The ultimate aim of optical computing is to use optical switching to perform computation in the same way as electronic computers use transistors to perform electronic switching. The main benefit of doing this would be the greatly increased computing speeds.

Given, though, that electronic computing is already highly developed, and viable, the aim is being approached more by attrition than direct assault. That is, newly developed optical technology is used where it gives an advantage over doing it electronically, but conventional electronics continues to be used for the rest of the system.

As for all computer systems, the three main organs are: processing, memory, and communication. It is communication, of course, that has been most successfully converted to optics, both long distance (over fibre optic cables), and in certain applications over short distances within electronic computers.

Gradually, over the years, more and more of the processing will be performed directly in optics, without having to convert to electronics first, and then back again. At first, this will be just for the processing associated with managing the fibre optic communication (such as switching, multiplexing and routing optical signals through telephone-like exchanges), but inroads are gradually being made deeper into the processing organ of the computer, starting with the specialist domain of signal processing.

Modulation

Communication by electromagnetic waves is not new, of course, since that is what radio transmission is. Many of the techniques for carrying signals on an optical beam therefore have parallels (at least in an abstract sense) with those used for radio communication. In particular, there is the idea of using one device to modulate the signal on to the carrier frequency, and another to demodulate it, extracting the signal again at the other end.

In optics, a laser is used as the source of the carrier, and the modulating signal is mixed in by altering some property of the medium through which the laser beam is passing. Certain materials can be made to change their refractive index, or their reflectivity, or their absorptivity, or their polarisation, under the influence of acoustic pressure (generated by piezo-electric transducers), or by an electric field (notably liquid crystals), or by the strength of an optical signal (akin to the effect that is used for auto-adjusting sun-glasses). It is the last of these, of course, that promises to give us true photonic switching for optical computing.
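The modulate/demodulate idea can be sketched in Python in its simplest form, on-off keying, in which the carrier is passed for a 1 and absorbed for a 0. This is an abstract illustration rather than a model of any particular optical device: the sample counts, the threshold and the function names are all assumptions made for the example, and a real carrier frequency would be vastly higher than the five cycles per bit used here.

```python
import math


def modulate(bits, samples_per_bit=40, cycles_per_bit=5):
    """On-off keying: the carrier is present for a 1 and absent for a 0."""
    signal = []
    for bit in bits:
        for k in range(samples_per_bit):
            phase = 2 * math.pi * cycles_per_bit * k / samples_per_bit
            signal.append(bit * math.sin(phase))
    return signal


def demodulate(signal, samples_per_bit=40, threshold=0.25):
    """Envelope detection: rectify, average over each bit period, threshold."""
    bits = []
    for start in range(0, len(signal), samples_per_bit):
        chunk = signal[start:start + samples_per_bit]
        level = sum(abs(s) for s in chunk) / len(chunk)
        bits.append(1 if level > threshold else 0)
    return bits
```

Passing a list such as `[1, 0, 1, 1, 0]` through `modulate` and then `demodulate` recovers the original bits, since the rectified carrier averages well above the threshold and the absent carrier averages zero.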

Lasers

A beam of light can be considered as consisting of a stream of particles (photons). Just like a stream of electrons, or water or gas molecules, the beam carries a certain energy, which can be used to carry information. The amount of power being delivered can be increased by increasing the number of particles in the flow, and/or by increasing the energy of the individual particles. The one difference, though, is that with electrons and molecules, the mass is constant, so it is the velocity that is increased to increase the energy being carried; but with photons it is the velocity that is constant, and so it is the effective mass (as given by de Broglie's formula) that is increased (that is, it is the frequency of the wave that is increased).
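The relationships in this paragraph can be put into numbers with a short Python sketch. It uses the standard relations E = hc/λ for the energy of a photon and m = E/c² for its effective mass; the choice of a 1550 nm wavelength (a common fibre optic band) and the function names are assumptions made for the example.

```python
PLANCK_J_S = 6.62607015e-34          # Planck constant, J s
LIGHT_SPEED_M_PER_S = 299_792_458.0  # speed of light in vacuum, m/s
AVOGADRO_PER_MOL = 6.02214076e23     # particles per mol


def photon_energy(wavelength_m: float) -> float:
    """Energy of one photon, E = h c / wavelength, in joules."""
    return PLANCK_J_S * LIGHT_SPEED_M_PER_S / wavelength_m


def effective_mass(wavelength_m: float) -> float:
    """Effective mass of one photon, m = E / c^2, in kilograms."""
    return photon_energy(wavelength_m) / LIGHT_SPEED_M_PER_S ** 2


def beam_power(photons_per_second: float, wavelength_m: float) -> float:
    """Power of a beam: number of photons per second times energy of each."""
    return photons_per_second * photon_energy(wavelength_m)


# Assumption: an infrared signal laser at 1550 nm.
power_of_one_mol_per_second = beam_power(AVOGADRO_PER_MOL, 1550e-9)
```

Halving the wavelength (doubling the frequency) doubles both the energy and the effective mass of each photon, while the velocity stays fixed. At 1550 nm, one mol of photons per second corresponds to a beam of roughly 77 kW, which is why signalling uses only a minute fraction of a mol.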

Like all systems in this universe, of course, lasers obey the first law of thermodynamics, and cannot originate their energy, but merely collect it from the input, ready for dispatching again at the output.

The main property of a laser beam is that it is coherent, behaving as though it were a single wave. This distinguishes it from other, more conventional light sources (incandescent light bulbs, gas discharge tubes and fluorescent lights) that pile their energy into huge batches of incoherent photons. It is this property of coherence that is used in signal lasers (for CD storage, and fibre optic communication).

It is usual, though not necessary, for a laser beam to be highly collimated. It is this property that tends to be used in industrial lasers, since it means that all the input energy is being channelled down to a highly confined volume. The ability to collect energy from a large volume for concentrating its output into a small volume (like collecting tributaries into a river) is attractive, but is not unique to lasers. The ability to collect energy over a long period of time for concentrating its release over an extremely short period of time, though, is made possible by the two-stroke cycle (energy-pump cycle, followed by stimulated emission cycle) of a pulse laser system.

Communications – Fibre optics

Fibre optic cables, with their connectors, are long, passive cylinders (fibres) of graduated glass, a few microns across but several kilometres long, that guide the optical signal, unchanged, along their length.

For input and output to the optical fibre channel, optoelectronic components are used for turning electronic signals into optical ones at one end of the optical fibre, and back again when it arrives at the other end (electronic/optic converters/transceivers).

For optical signal processing, there are optical switches and optical multiplexers, to route signals from one optical fibre to another, for example, and to provide the add/drop function of getting channels off and on the main optical highways, without needing to be converted to electronics. Similarly, there are optical amplifiers and optical modulators that can alter the optical signal, or mix two optical signals together, again without needing to be converted to electronics to achieve it.

For optical communication equipment, there are optical repeaters, optical routers and optical exchanges (like telephone exchanges, but using photons instead of electrons).

Optical signal processing

This is where we leave the domain of "Optics" and enter that of "Photonics" (manipulating photons to do what an electronics engineer does with electrons).

The analogues of voltage/pressure, current, resistance and capacitance, should each be possible. However, our present methods of processing light are predominantly methods of diverting it from travelling in straight lines: refraction (in prisms and lenses), reflection (with mirrors), diffraction (through gratings), and absorption (in filters).

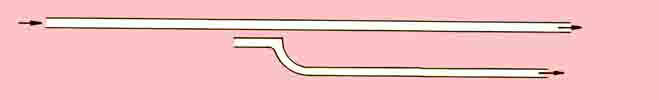

One important component is the evanescent coupler. If a beam of light is sent down a simple channel, the whole beam will emerge at the other end, provided that the channel losses are negligible. If, though, there is a section in which a second channel lies extremely close to the first, a certain proportion, n, of the energy of the original beam will cross the gap. The original beam will have been split, with a proportion, n, leaving by the second channel, and the remaining proportion, (1-n), leaving by the first.

Not only can the coupling coefficient, n, be used as a scalar multiplier for performing simple processing, but also its value can be varied. In particular, materials can be engineered for which the value of n can be varied by pressure (such as from a piezoelectric crystal) or by an electric field (such as in Lithium Niobate), each giving electronic means of supplying the input, or by the intensity of light, thereby giving an optical means of supplying the input.
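The behaviour of an idealised, lossless evanescent coupler can be sketched in a few lines of Python. The function name is chosen for illustration, and the coupling coefficient n is simply passed in as a number: how it is set (by pressure, electric field or light intensity) is outside the sketch.

```python
from typing import Tuple


def evanescent_coupler(power_in: float, n: float) -> Tuple[float, float]:
    """Split a beam between two channels.

    Returns (power leaving by the second channel, power leaving by the
    first channel), that is (n * P, (1 - n) * P). Losses are ignored.
    """
    if not 0.0 <= n <= 1.0:
        raise ValueError("coupling coefficient n must lie between 0 and 1")
    return n * power_in, (1.0 - n) * power_in
```

For example, `evanescent_coupler(10.0, 0.3)` sends about 3 units of power across the gap and leaves about 7 in the original channel. The two outputs always sum to the input, so the device multiplies by a scalar but never adds energy.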

Another technique involves taking a non-linear optical medium (using multi-quantum well, MQW, structures, or specially designed polymer materials), and propagating two signal beams in opposite directions. For any given angle of incidence of a third beam, the probe beam, a fourth beam is generated that gives the phase conjugate of the other three beams.

Both of these devices are capable of performing signal processing operations directly on a beam of light, without having to change it first to an electronic signal. For the former technique, large arrays of evanescent couplers can be laid out for performing matrix operations, including matrix multiplication, directly on the optical signals.
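As a rough sketch of how an array of couplers could perform a matrix operation, the Python below treats each matrix element as a coupling coefficient between 0 and 1, and each output as the sum of the correspondingly weighted inputs. This is a heavily idealised model: it assumes that the contributions add as simple powers, and it ignores the extra splitting needed to fan each input out to every row. The function name is hypothetical.

```python
def coupler_matrix_multiply(coefficients, inputs):
    """Multiply a vector of optical powers by a matrix of coupling coefficients.

    coefficients[i][j] is the fraction of input j that is coupled into
    output i, so every element must lie between 0 and 1.
    """
    for row in coefficients:
        if len(row) != len(inputs):
            raise ValueError("each row needs one coefficient per input")
        if any(not 0.0 <= n <= 1.0 for n in row):
            raise ValueError("coupling coefficients must lie between 0 and 1")
    return [sum(n * x for n, x in zip(row, inputs)) for row in coefficients]
```

For instance, `coupler_matrix_multiply([[0.5, 0.25], [1.0, 0.0]], [4.0, 8.0])` gives `[4.0, 4.0]`. Because no coefficient can exceed 1, such an array can only attenuate and recombine its inputs.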

Since the value of n, and hence also of (1-n), is always a fraction between 0 and 1, the evanescent coupler has an attenuating character to its operation. It is for this reason that it is not quite ready for taking over in photonics the switching-amplifier role that the transistor plays in electronics, with the ability that this gives to build large CPUs from circuit schematics.

The other structures, such as the MQW, also require more energy at the control input than there is in the controlled signal line, and so are not ready to become the optical equivalent of the transistor, either. However, Lukin built on an idea by Zayats, to use surface plasmons as switching devices (NS, 21-Jul-2007, p28). When set up in the right way, single photons in the control beam are enough to change the properties of the plasmon for the passing signal beam.

Meanwhile, Poustie (Photonics Spectra, Aug-2007, p62) has developed a range of Semiconductor Optical Amplifiers (SOA) that use semiconductor lasers in their role as light amplifiers rather than light oscillators (see elsewhere on the internet for the story of the LOSER versus the LASER). These can be used in a variety of ways, either to use the amplitude of the control signal to alter that of the other signal (cross-gain modulation), or to use the phase of the control signal to alter that of the other (cross-phase modulation), or to perform four-wave mixing or non-linear polarisation rotation. These, of course, require energy to be supplied from an electrical source, for pumping the laser material; but the point is that the amount of energy in the control signal is three orders of magnitude less than that in the signal that is being controlled (thus making it behave as an amplifier device not unlike the simple discrete transistor).
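To relate "three orders of magnitude" to the decibel figures usually quoted for amplifiers, the ratio of controlled power to control power can be converted as follows. This Python sketch is illustrative only: the function name and the example powers are assumptions, not values taken from the cited article.

```python
import math


def control_gain_db(control_power_w: float, controlled_power_w: float) -> float:
    """Ratio of controlled power to control power, expressed in decibels."""
    return 10.0 * math.log10(controlled_power_w / control_power_w)


# Hypothetical example: a 1 microwatt control beam steering a 1 milliwatt signal.
example_gain_db = control_gain_db(1e-6, 1e-3)
```

A factor of a thousand corresponds to about 30 dB, whereas a passive device such as the evanescent coupler can never show a positive figure.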

Optical computers will eventually be possible. Hybrid photonic/electronic computers are already being developed. Over the coming years, these will gradually evolve, taking more and more of the processing away from the electronics. Using them to implement convolutional neural nets and matrix multiplication could dramatically reduce the power needs and remove the most recent barrier to keeping to Moore's law (NS, 24-Mar-2018, p6). Artificial neural nets (ANN) can be used to support conventional arithmetic and logic functions, allowing a neuromorphic computer to be implemented (NS, 02-Apr-2022, p9).

DNA Computers

Proposals have been made for DNA logic gates, and ways of interconnecting them to implement Turing machines. One exercise was for a DNA system to solve the travelling salesman problem (NS, 06-Nov-2004, p21). DNA logic gates have been demonstrated that are capable of playing noughts and crosses (tic-tac-toe) (NS, 21-May-2022, p16; NS, 23-Aug-2003, p19). More practically, though, it is better to leave this sort of computation to electronics, and to concentrate on simpler DNA devices that perform functions that electronics is less able to perform: testing for combinations of genes in samples (NS, 05-Jun-2010, p9), delivering drugs in a conditional way (NS, 31-Mar-2018, p9), and distributing therapeutic sequences, inside the patient's body, that judge for themselves if, when and where to perform their operation (NS, 05-Aug-2006, p24). A molecular computer has been demonstrated (NS, 09-Jul-2022, p12) that, rather than processing kinesins inside microtubule hardware, has microtubules flowing through the kinesins, thereby distributing the parallel computation.

However, DNA logic can be taken much further (NS, 07-Jul-2007, p6). Systems biology can take genetic engineering right down to the level of bio-hacking, which is akin to the way that hobbyists and engineers took computing away from its IBM-dominated image in the 1970s (NS, 20-May-2006, p43), and which carries with it the threat of bio-terrorism (NS, 18-Mar-2017, p5).

The search for the "minimum gene set" that can support life (NS, 31-May-2003, p28) can be conducted top-down (disabling non-essential genes, one by one, from a functioning organism), or bottom-up (adding essential genes one by one, until the completely new organism starts to function). The top-down approach has been reported (NS, 29-May-2010, p6; NS, 12-Jul-2014, p23; NS, 02-Apr-2016, p6) along with 6 chromosomes of yeast, soon to be achieved for all 16 of its chromosomes (NS, 18-Mar-2017, p16). Studies have shown how adaptable organisms are to the ad hoc introduction of coding for novel proteins (NS, 13-May-2017, p12).

A programming language, Cello, has been developed (NS, 09-Apr-2016, p16) based on the hardware description language, Verilog, allowing designers to specify the function of a DNA circuit in shorthand, and convert it into a DNA wiring diagram ready for a DNA synthesiser.

The new genes do not have to keep to the so-called "universal genetic code" (summarised in the table below), in which 20 amino acids have a standard mapping to the 64 possible triple-nucleotide codons using the four nucleic acid bases (NS, 30-Aug-2003, p35). This is despite the intriguing numerical properties that the code exhibits, based on the number 37 (NS, 20-Dec-2014, p61), albeit with more to do with properties of multiples of 9 (remainder 1) than of amino acids. One possibility is to use one of the duplicate codons to code for a new amino acid. This can only be done, though, if all instances of the codon in the existing DNA are first substituted over to one of the synonym codons (NS, 14-Jun-2008, p6). It has been successfully demonstrated using what had been the UAA stop codon (NS, 26-Oct-2013, p8) and more completely on the UAG codon (NS, 27-Aug-2016, p10). This has been achieved for a complete yeast chromosome (NS, 05-Apr-2014, p15). It has even been proposed as a mechanism for making genetic engineering safe (NS, 24-Jan-2015, p11), so that the organism cannot interbreed with wild species, and cannot survive outside the laboratory without a source of the novel amino acids.
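The substitution step described above can be sketched in Python. The codon table is the standard genetic code, written in the usual compact form with the bases in the order T, C, A, G and with `*` marking a stop codon; DNA spelling is used, so the UAG codon appears as `TAG`. The function names, and the short example sequence, are hypothetical, and the sketch assumes that the sequence is already aligned to its reading frame.

```python
from itertools import product

BASES = "TCAG"
# Standard genetic code, one letter per codon, in TCAG order ('*' = stop).
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    "".join(codon): amino_acid
    for codon, amino_acid in zip(product(BASES, repeat=3), AMINO_ACIDS)
}


def synonyms(codon: str) -> list:
    """Other codons that carry the same meaning as the given codon."""
    meaning = CODON_TABLE[codon]
    return sorted(c for c, m in CODON_TABLE.items() if m == meaning and c != codon)


def free_up_codon(dna: str, old: str, new: str) -> str:
    """Replace every in-frame instance of `old` by its synonym `new`."""
    if CODON_TABLE[old] != CODON_TABLE[new]:
        raise ValueError("the replacement codon must be a synonym")
    codons = [dna[i:i + 3] for i in range(0, len(dna), 3)]
    return "".join(new if codon == old else codon for codon in codons)
```

Once `free_up_codon` has removed every instance of, say, `TAG`, the protein products are unchanged, and the vacated codon is free to be given a new meaning.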

Not only can the coding be changed, but also systems can be artificially created using quadruple-nucleotide codons (NS, 20-Feb-2010, p14; NS, 30-Sep-2000, p32), or codons involving novel nucleic acid bases (NS, 12-Jul-2008, p18). Romesberg has created two new complementary artificial letters of the DNA alphabet (d5SICS and dNaM, abbreviated to X and Y) and has shown that they are viable in a living E. coli bacterium (NS, 02-Dec-2017, p9; NS, 10-May-2014, p17), and Benner has created two others (Z and P) and shown that they can be repeated in the DNA sequence as well as the normal A-T and C-G ones (NS, 30-May-2015, p10) and further that they can be made to bind to cancer cells better than the normal bases (NS, 06-Jun-2015, p17). Meanwhile, XNA (xeno nucleic acid, with six completely new bases) has been demonstrated, complete with a system of retroviruses that transfers the information to DNA, and then back to a different XNA molecule (NS, 28-Apr-2012, p10). As well as being a useful tool for investigating possible mechanisms for evolution, these could also prove useful for the engineering of drugs and chemicals (NS, 08-Dec-2018, p40), and machines for performing certain tasks (such as extracting pollutants). Another possibility has been suggested, as a support for quantum computing.
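The room that these alternative codings create can be gauged by simple counting: an alphabet of b bases read in codons of length k gives b^k distinct codons. The Python sketch below is illustrative only; the function name is an assumption, and the six-letter case assumes the four natural bases plus one artificial pair such as X and Y.

```python
def codon_count(number_of_bases: int, codon_length: int) -> int:
    """Number of distinct codons for a given alphabet size and codon length."""
    return number_of_bases ** codon_length


standard_code = codon_count(4, 3)      # four bases, triplet codons
quadruplet_code = codon_count(4, 4)    # four bases, quadruple-nucleotide codons
six_letter_code = codon_count(6, 3)    # assumed: A, T, C, G plus X and Y
```

Against the 64 codons of the standard code, quadruplets offer 256 and a six-letter alphabet offers 216, leaving many codons available for novel amino acids.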

Changing this mapping has been likened to changing the instruction set of the CPU on which the operating system is running. This became frozen early in Earth's history (NS, 04-Nov-2017, p28), but life, on exoplanets for example, might not even be restricted to the chemistry of carbon and water (NS, 09-Jun-2007, p34). In another vein, there are also indications (NS, 08-Jun-2002, p31) that the operating system of conventional genetic life is stored in the enormous stretches of so-called "junk DNA" (the non-coding introns in between the coding exons). The basic instructions in this operating system involve changing the state of finite state machines that have an influence on the way that the rest of the DNA is interpreted. These instructions are now starting to be investigated (NS, 14-Jul-2007, p42).
